
Conformal Prediction for Long-Tailed Classification

Joseph Salmon

IMAG, Univ Montpellier, CNRS, Inria, Montpellier, France

Pl@ntnet Consortium

Tiffany Ding

UC Berkeley

Jean-Baptiste Fermanian

Inria

and the whole team from Pl@ntNet

A citizen science platform using machine learning to help people identify plants with their mobile phones

- Website: https://plantnet.org/

- Note: no mushroom identification!

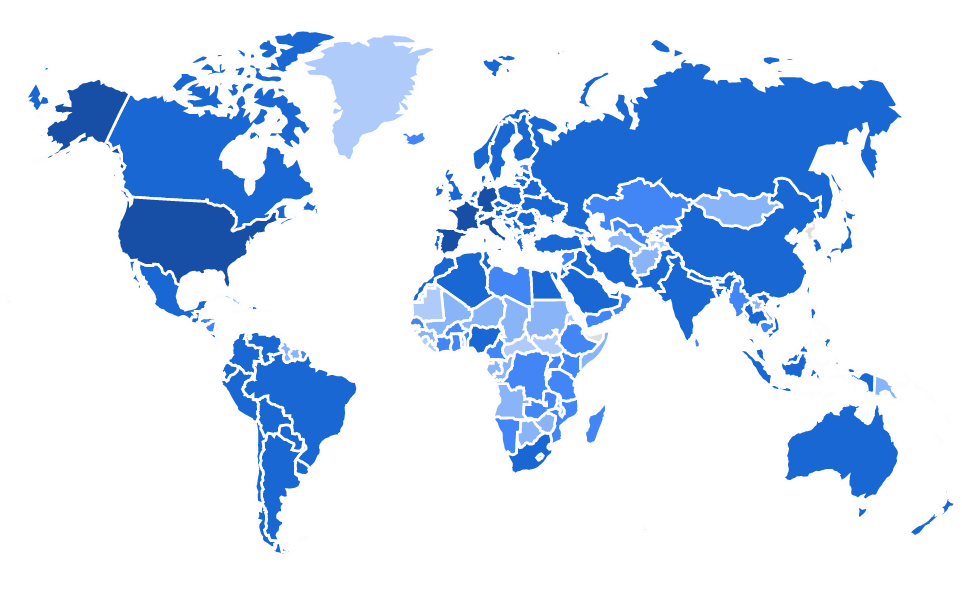

- 25M+ users

- 200+ countries

- Up to 2M images uploaded/day

- 75K+ species (=labels)

- 1B+ total images

- 10M+ labeled / validated

Pl@ntNet-300K dataset (Garcin et al., 2021): a baby Pl@ntNet, available on Zenodo

- ~300,000 images spanning 1,000+ plant species

- Classification setting: \(X_i\) are images, \(Y_i\) are labels=species

- Note the long tail: many species are rarely collected (notably endangered ones)

Conformal Prediction (Vovk et al., 2005)

\[ \mathcal{C}_{\alpha}(X) = \big\{ y : s(X,y) \geq t_\alpha \big\} \]

Marginal coverage targets:

\[\mathbb{P}\big[ Y \in \mathcal{C}_{\alpha}(X) \big ] \geq 1 - \alpha.\]

Class-conditional coverage targets:

\[\forall y,\quad \mathbb{P}\big[ Y \in \mathcal{C}_\alpha(X) | Y=y \big ] \geq 1 - \alpha.\]
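As a rough illustration, both coverage notions can be estimated on held-out data. The sketch below assumes the prediction sets are stored as a boolean matrix `sets` (one row per test point, one column per label) and the true labels as integer indices; the function names are hypothetical and not taken from the talk.

```python
import numpy as np


def marginal_coverage(sets, labels):
    """Fraction of points whose true label belongs to the predicted set."""
    sets, labels = np.asarray(sets, dtype=bool), np.asarray(labels)
    return sets[np.arange(len(labels)), labels].mean()


def classwise_coverage(sets, labels):
    """Coverage computed separately for each true class.

    Classes with no example in the sample get NaN (assumption of this sketch).
    """
    sets, labels = np.asarray(sets, dtype=bool), np.asarray(labels)
    hit = sets[np.arange(len(labels)), labels]  # was the true label covered?
    coverage = np.full(sets.shape[1], np.nan)
    for y in range(sets.shape[1]):
        mask = labels == y
        if mask.any():
            coverage[y] = hit[mask].mean()
    return coverage
```

On long-tailed data the per-class estimates for rare species rest on very few points, so they are noisy even when the marginal estimate is stable.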

Theorem (Informal)

Among all sets with marginal coverage of at least \(1-\alpha\), the one of minimum size is:

\[ \mathcal{C}_{\alpha}(x) = \left\{ y : p(y|x) \geq t_\alpha \right\} \]

Theorem (Informal)

Among all sets with class-conditional coverage of at least \(1-\alpha\), the one of minimum size is:

\[ \mathcal{C}_{\alpha}(x) = \left\{ y : p(y|x) \geq t_\alpha^{y} \right\} \]

Marginal: calibrate \(t_{\alpha}\) on the whole calibration set \((X_i, Y_i)_{i=1}^n\)

Conditional: calibrate \(t_{\alpha}^y\) only on \((X_i, Y_i)\) such that \(Y_i = y\)
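The two calibration schemes can be sketched as follows. This is a minimal split-conformal sketch, assuming the score is the softmax probability \(s(x,y) = \hat p(y|x)\), stored in an array `probs` of shape (n, number of classes), and that the threshold is the \(\lfloor \alpha (n+1) \rfloor\)-th smallest true-class score. All function names are hypothetical.

```python
import numpy as np


def conformal_threshold(true_scores, alpha):
    """k-th smallest calibration score, with k = floor(alpha * (n + 1))."""
    scores = np.sort(np.asarray(true_scores, dtype=float))
    k = int(np.floor(alpha * (scores.size + 1)))
    # k == 0: too few calibration points for level alpha, so keep every label.
    return scores[k - 1] if k > 0 else -np.inf


def calibrate_marginal(probs, labels, alpha):
    """Single threshold t_alpha from the whole calibration set."""
    labels = np.asarray(labels)
    true_scores = probs[np.arange(len(labels)), labels]
    return conformal_threshold(true_scores, alpha)


def calibrate_classwise(probs, labels, alpha):
    """One threshold t_alpha^y per class, using only points with Y_i = y."""
    labels = np.asarray(labels)
    true_scores = probs[np.arange(len(labels)), labels]
    return np.array([
        conformal_threshold(true_scores[labels == y], alpha)
        for y in range(probs.shape[1])
    ])


def prediction_sets(probs, thresholds):
    """Boolean matrix: entry (i, y) is True when y is in the set for x_i.

    `thresholds` may be a scalar (marginal) or a per-class vector (classwise).
    """
    return np.asarray(probs) >= thresholds
```

In this sketch, a class with fewer than \(1/\alpha - 1\) calibration points gets the threshold \(-\infty\), so it is included in every prediction set. This is where the long tail hurts class-wise calibration.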

Targeting Macro-Coverage

\[ \text{MacroCoverage} = \frac{1}{|\mathcal{Y}|} \sum_{y \in \mathcal{Y}} \mathbb{P}\big( y \in \mathcal{C}(X) \, | \, Y = y \big) \]
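One way to read this quantity, sketched here under the assumption that each class has a density \(p(x|y)\) and a prior \(p(y) > 0\): by Bayes' rule, \(p(x|y) = p(y|x)\,p(x)/p(y)\), so each term of the sum becomes an expectation over \(X\).

\[ \mathbb{P}\big( y \in \mathcal{C}(X) \, | \, Y = y \big) = \int \mathbb{1}\{ y \in \mathcal{C}(x) \} \, p(x|y) \, dx = \mathbb{E}_X\Big[ \mathbb{1}\{ y \in \mathcal{C}(X) \} \, \frac{p(y|X)}{p(y)} \Big] \]

\[ \text{MacroCoverage} = \frac{1}{|\mathcal{Y}|} \, \mathbb{E}_X\Big[ \sum_{y \in \mathcal{C}(X)} \frac{p(y|X)}{p(y)} \Big] \]

For comparison, marginal coverage equals \(\mathbb{E}_X\big[ \sum_{y \in \mathcal{C}(X)} p(y|X) \big]\). Adding a label \(y\) to \(\mathcal{C}(x)\) always costs one unit of set size, but it buys \(p(y|x)\) of marginal coverage versus \(p(y|x)/p(y)\) (up to the factor \(1/|\mathcal{Y}|\)) of macro-coverage.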

Theorem (Informal)

Among all sets with Macro-Coverage of at least \(1-\alpha\), the one of minimum size is:

\[ \mathcal{C}_{\alpha}(x) = \left\{ y : \frac{p(y|x)}{p(y)} \geq t_\alpha \right\} \]
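A minimal sketch of sets built from this prior-adjusted score, assuming \(p(y|x)\) is replaced by softmax outputs `probs` and \(p(y)\) by smoothed empirical class frequencies. The function names, the smoothing, and the choice to calibrate a single threshold on the whole calibration set are illustrative assumptions, not the procedure from the talk. A threshold calibrated this way carries a marginal guarantee, not a macro-coverage one.

```python
import numpy as np


def estimate_priors(labels, n_classes, smoothing=1.0):
    """Smoothed class frequencies; smoothing > 0 avoids dividing by zero."""
    counts = np.bincount(labels, minlength=n_classes).astype(float)
    return (counts + smoothing) / (counts.sum() + smoothing * n_classes)


def prior_adjusted_scores(probs, priors):
    """Score p(y|x) / p(y): rare classes are boosted, frequent ones damped."""
    return np.asarray(probs) / np.asarray(priors)


def calibrate_threshold(scores, labels, alpha):
    """floor(alpha * (n + 1))-th smallest true-class score (-inf if rank is 0)."""
    labels = np.asarray(labels)
    true_scores = np.sort(scores[np.arange(len(labels)), labels])
    k = int(np.floor(alpha * (len(labels) + 1)))
    return true_scores[k - 1] if k > 0 else -np.inf


def prior_adjusted_sets(probs, priors, threshold):
    """Boolean matrix: entry (i, y) is True when p(y|x_i) / p(y) >= threshold."""
    return prior_adjusted_scores(probs, priors) >= threshold
```

For instance, with priors (0.75, 0.25) and predicted probabilities (0.6, 0.4), the adjusted scores are roughly (0.8, 1.6): the rare class now ranks first even though its predicted probability is lower.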